Amazon: AI-powered org planning

Enterprise · Agentic AI · 0–1

5-min demo: How I prototype AI interactions

Below is a quick walkthrough of my workflow: how I use a coding agent to scaffold architecture, test interaction patterns, and validate AI behaviors before iterating with engineering and product partners.

Timestamps:

0:00 – 1:56, Workflow overview: how I scaffold simplified but realistic architecture

1:56 onward, Live prototype walkthrough: data drill-down and navigation pattern demo

Problem: executives flying blind in a manual, slow, and fragmented org planning cycle

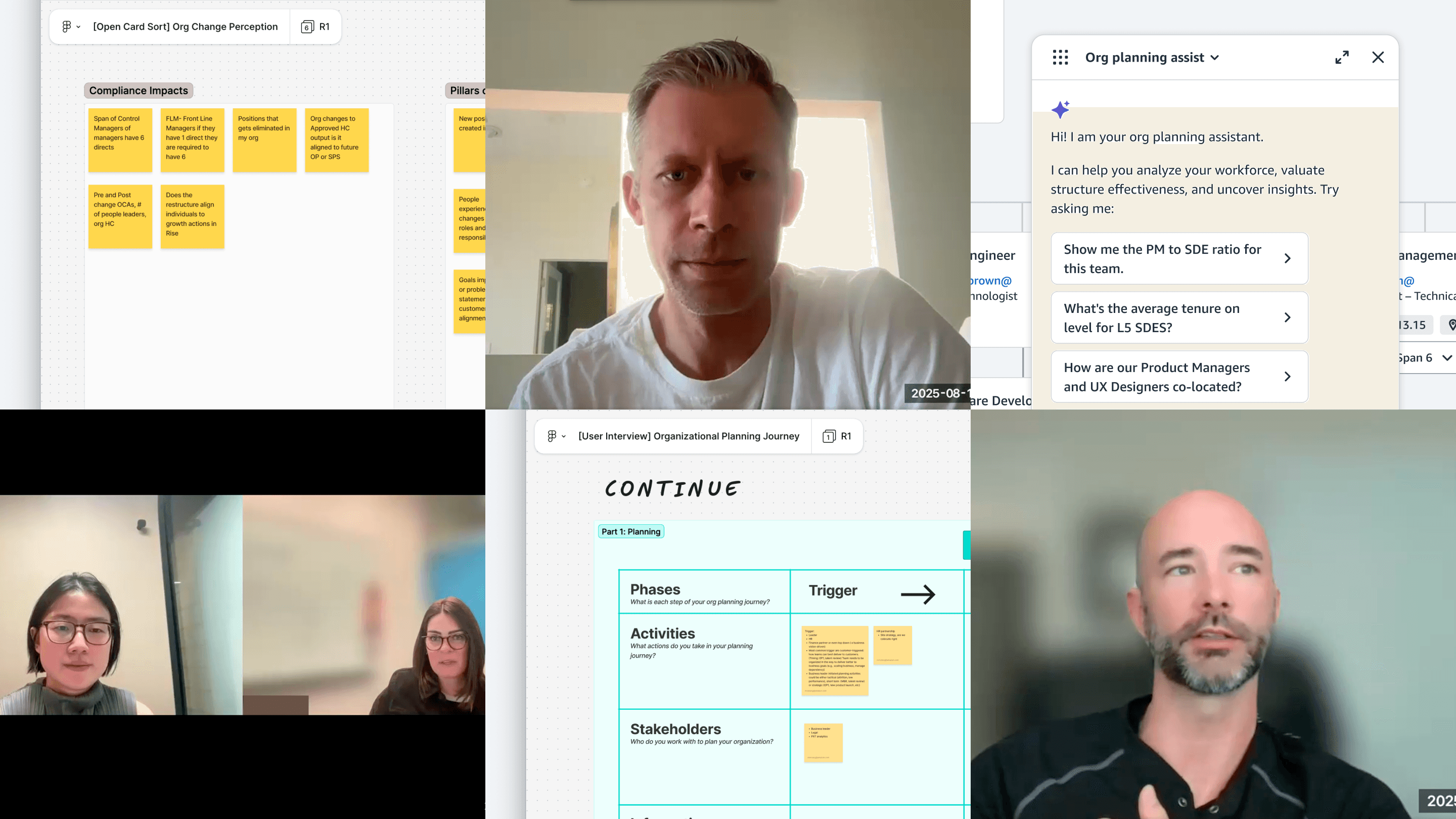

Three research streams to define where AI belongs

I ran three research streams: contextual inquiry to understand current workflows, card sorting to map how people think about org changes, and concept testing to validate AI interaction models.

Interviews with HRBPs and leaders

Research findings that shaped AI boundaries

1.

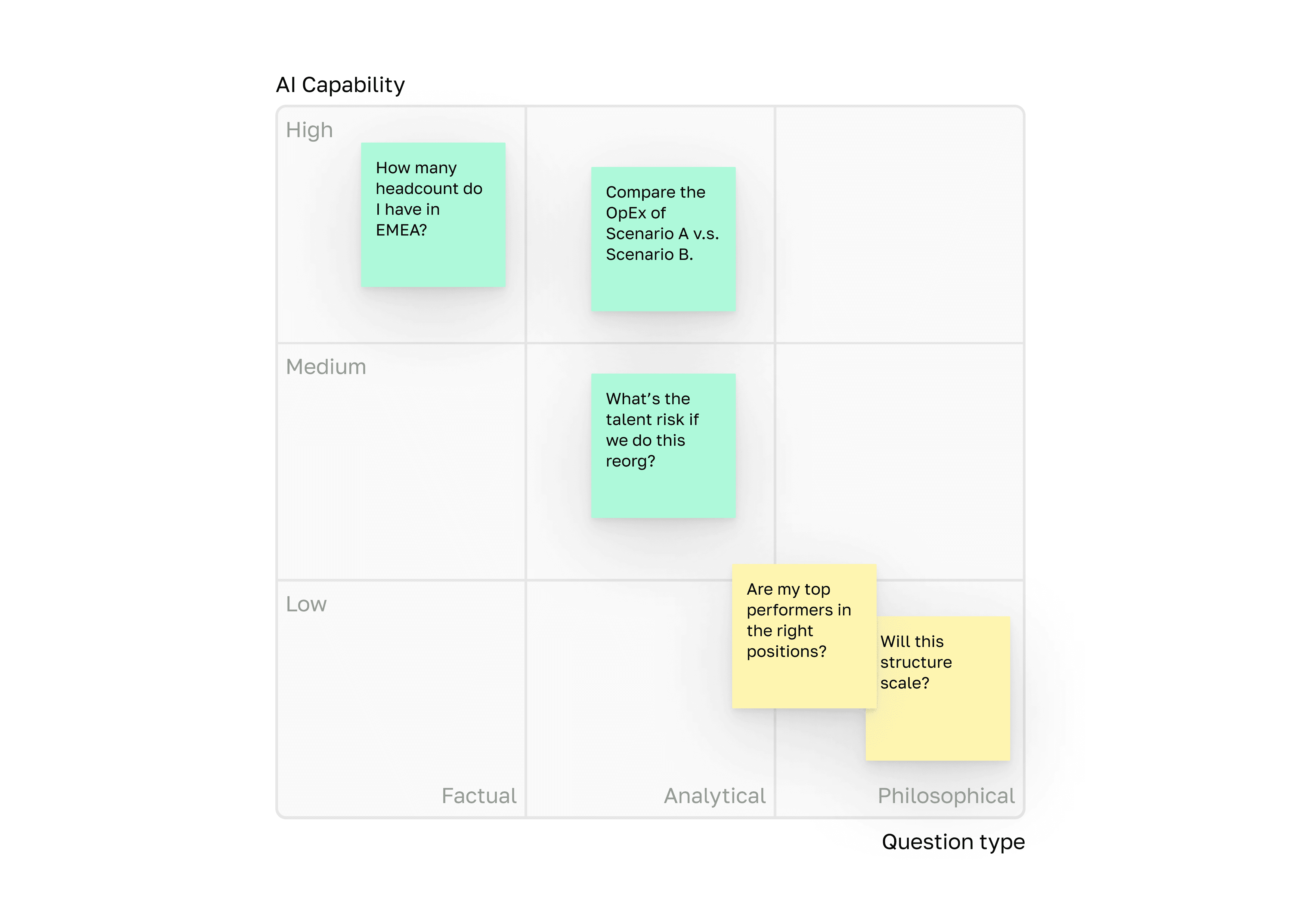

HRBPs maintain shadow spreadsheets to manually calculate metrics no system provides: manager ratios, role composition, co-location rates.

Design implication: The data model must compute core planning metrics natively.
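To make "compute natively" concrete, here is a minimal sketch of how the three metrics named above could be derived from employee records. It is not the shipped schema: the `Employee` fields, the function names, and the exact metric definitions (for example, reading "manager ratio" as average direct reports per manager) are assumptions made for illustration, and the sample org is invented.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Employee:
    # Hypothetical fields; the real schema is more complex.
    emp_id: str
    role: str
    location: str
    manager_id: Optional[str]  # None for the top of the org


def manager_ratio(employees: List[Employee]) -> float:
    """Average number of direct reports per manager (assumed definition)."""
    reports = Counter(e.manager_id for e in employees if e.manager_id is not None)
    return sum(reports.values()) / len(reports) if reports else 0.0


def role_composition(employees: List[Employee]) -> Dict[str, float]:
    """Share of total headcount held by each role."""
    total = len(employees)
    counts = Counter(e.role for e in employees)
    return {role: n / total for role, n in counts.items()} if total else {}


def co_location_rate(employees: List[Employee]) -> float:
    """Share of reports who sit in the same location as their manager."""
    by_id = {e.emp_id: e for e in employees}
    pairs = [(e, by_id[e.manager_id]) for e in employees if e.manager_id in by_id]
    if not pairs:
        return 0.0
    return sum(e.location == m.location for e, m in pairs) / len(pairs)


# Invented sample org, for illustration only.
org = [
    Employee("e1", "Director", "Seattle", None),
    Employee("e2", "Manager", "Seattle", "e1"),
    Employee("e3", "Manager", "Austin", "e1"),
    Employee("e4", "Engineer", "Seattle", "e2"),
    Employee("e5", "Engineer", "Austin", "e2"),
    Employee("e6", "Engineer", "Austin", "e3"),
]

print(manager_ratio(org))
print(role_composition(org))
print(co_location_rate(org))
```

Computing these in the data model, rather than in each HRBP's spreadsheet, means every user and the AI reason over the same numbers.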

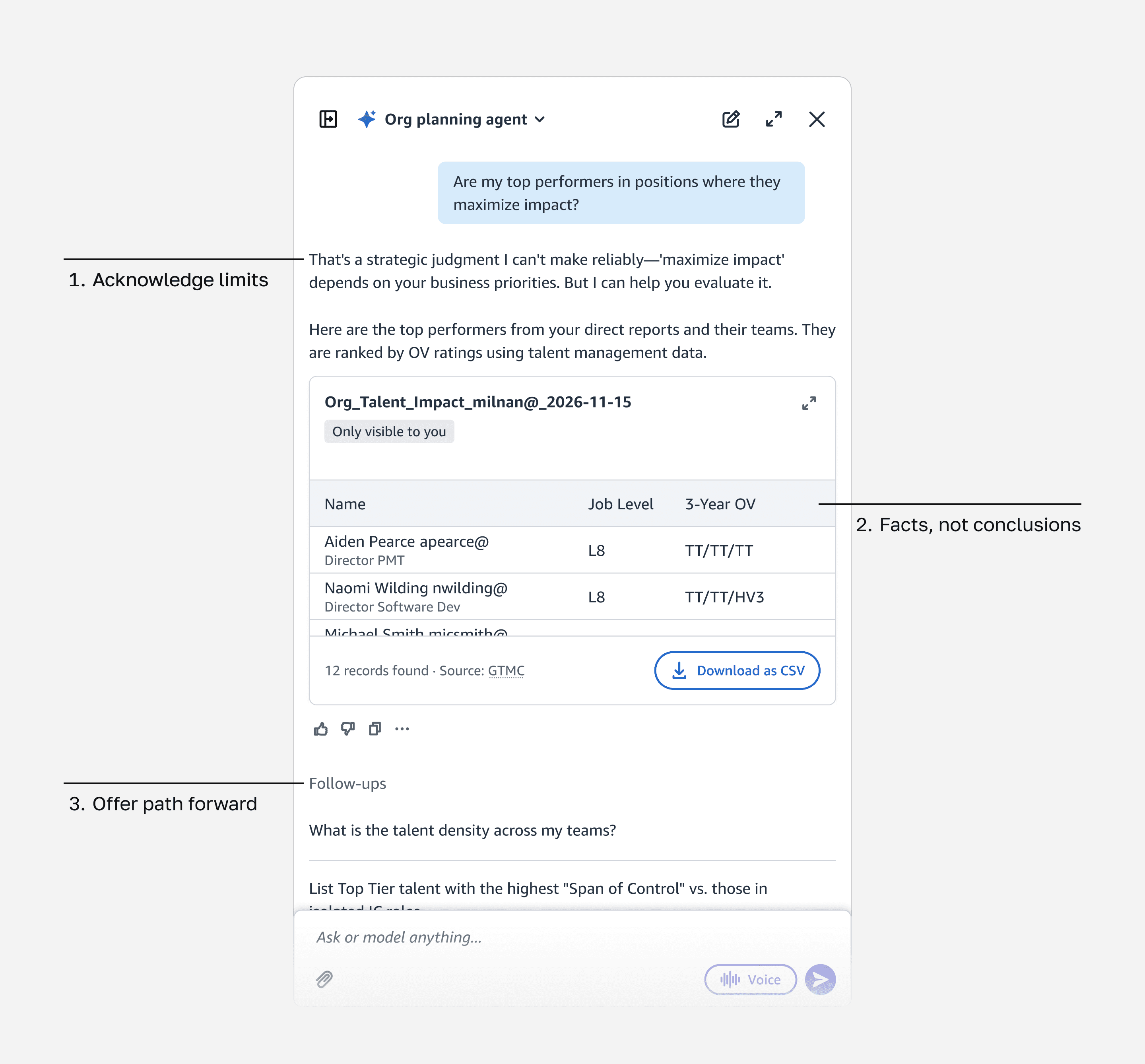

Mapping of question types to AI capability

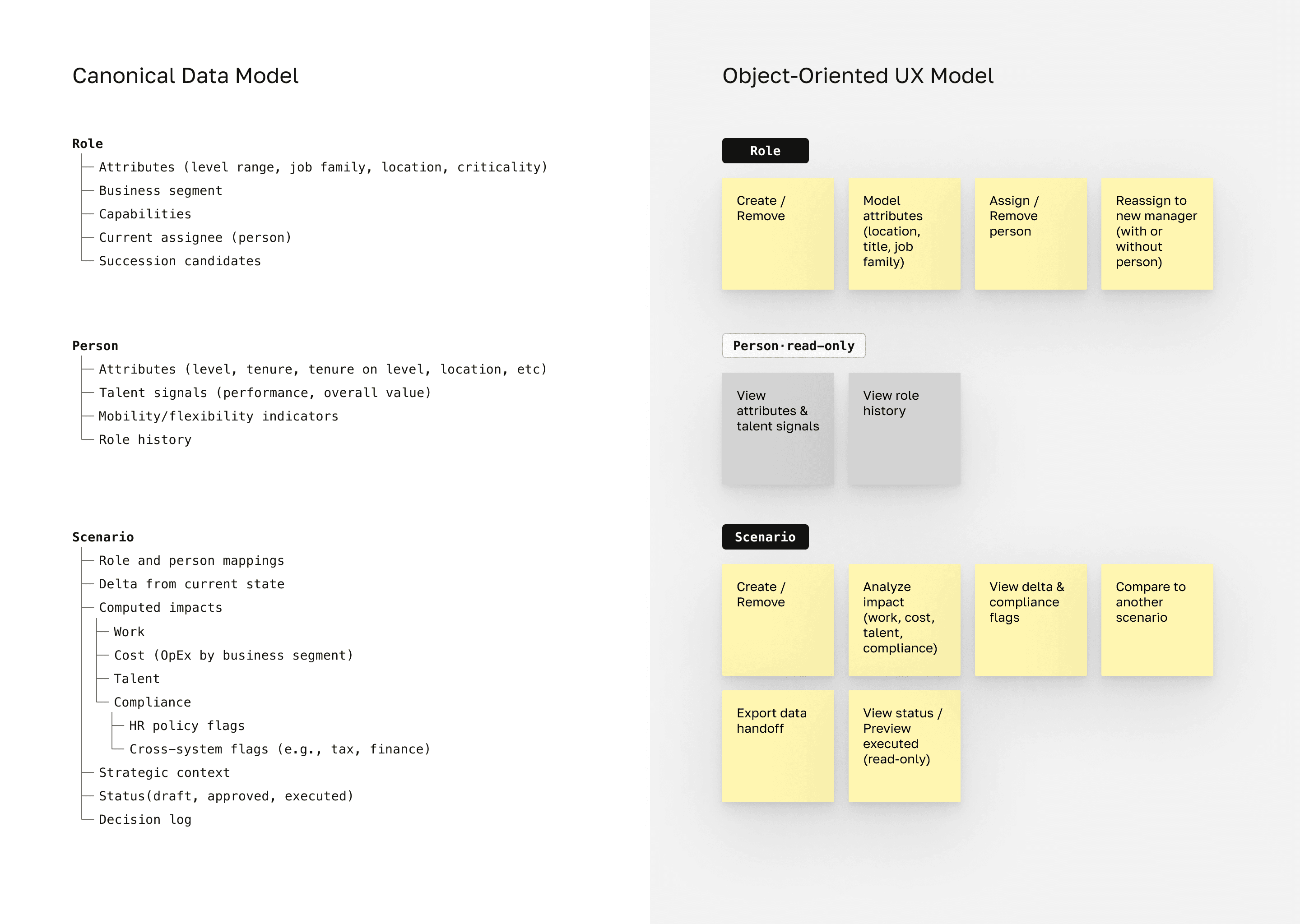

Designing the data model AI reasons over

Simplified model. The actual schema is more complex, reflecting legacy system constraints and ongoing migrations.

How should AI act when it receives questions it can't answer reliably?

The most important pattern I designed was the AI Pivot pattern: how the AI responds when users ask questions it can't reliably answer.

For example, if a user asks, "Are my top performers in roles where they make the most impact?" the AI won't attempt to answer directly. Instead, it acknowledges the question requires judgment, surfaces relevant data, and lets the user draw their own conclusion.

Design principles:

Acknowledge the question's nature: Don't pretend the question can be answered if it can't

Provide users with facts, not conclusions: This way, they can make their own judgment

Offer paths forward: Suggest what the AI can assist with next

Trust comes from honesty about limits, not from having all the answers.
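The three principles can be sketched as a small response contract. This is an illustration, not the production logic: `classify` is a keyword stub standing in for whatever classifier the real system uses, and the names `QuestionType`, `PivotResponse`, `respond`, the cue list, and the sample facts are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Optional


class QuestionType(Enum):
    FACTUAL = "factual"    # answerable from the data model
    JUDGMENT = "judgment"  # requires human judgment


@dataclass
class PivotResponse:
    acknowledgement: str       # principle 1: name the question's nature
    facts: Dict[str, object]   # principle 2: facts, not conclusions
    next_steps: List[str]      # principle 3: paths forward
    conclusion: Optional[str]  # stays None for judgment questions


# Stand-in cues; a real system would not rely on keyword matching.
JUDGMENT_CUES = ("most impact", "should", "is it worth")


def classify(question: str) -> QuestionType:
    q = question.lower()
    if any(cue in q for cue in JUDGMENT_CUES):
        return QuestionType.JUDGMENT
    return QuestionType.FACTUAL


def respond(question: str, facts: Dict[str, object]) -> PivotResponse:
    if classify(question) is QuestionType.JUDGMENT:
        # Pivot: surface the data and leave the conclusion to the user.
        return PivotResponse(
            acknowledgement="This question requires judgment, so I won't answer it directly.",
            facts=facts,
            next_steps=["Break this down by team", "Compare against last cycle"],
            conclusion=None,
        )
    summary = "; ".join(f"{k}: {v}" for k, v in facts.items())
    return PivotResponse("Here is what the data shows.", facts, [], summary)


# Made-up facts, for illustration only.
reply = respond(
    "Are my top performers in roles where they make the most impact?",
    {"top_performers": 12, "in_critical_roles": 7},
)
print(reply.acknowledgement)
print(reply.conclusion)
```

Making `conclusion` an explicit optional field keeps the pivot visible in the interface contract: the UI can render facts and next steps without ever showing a verdict the AI did not reach.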

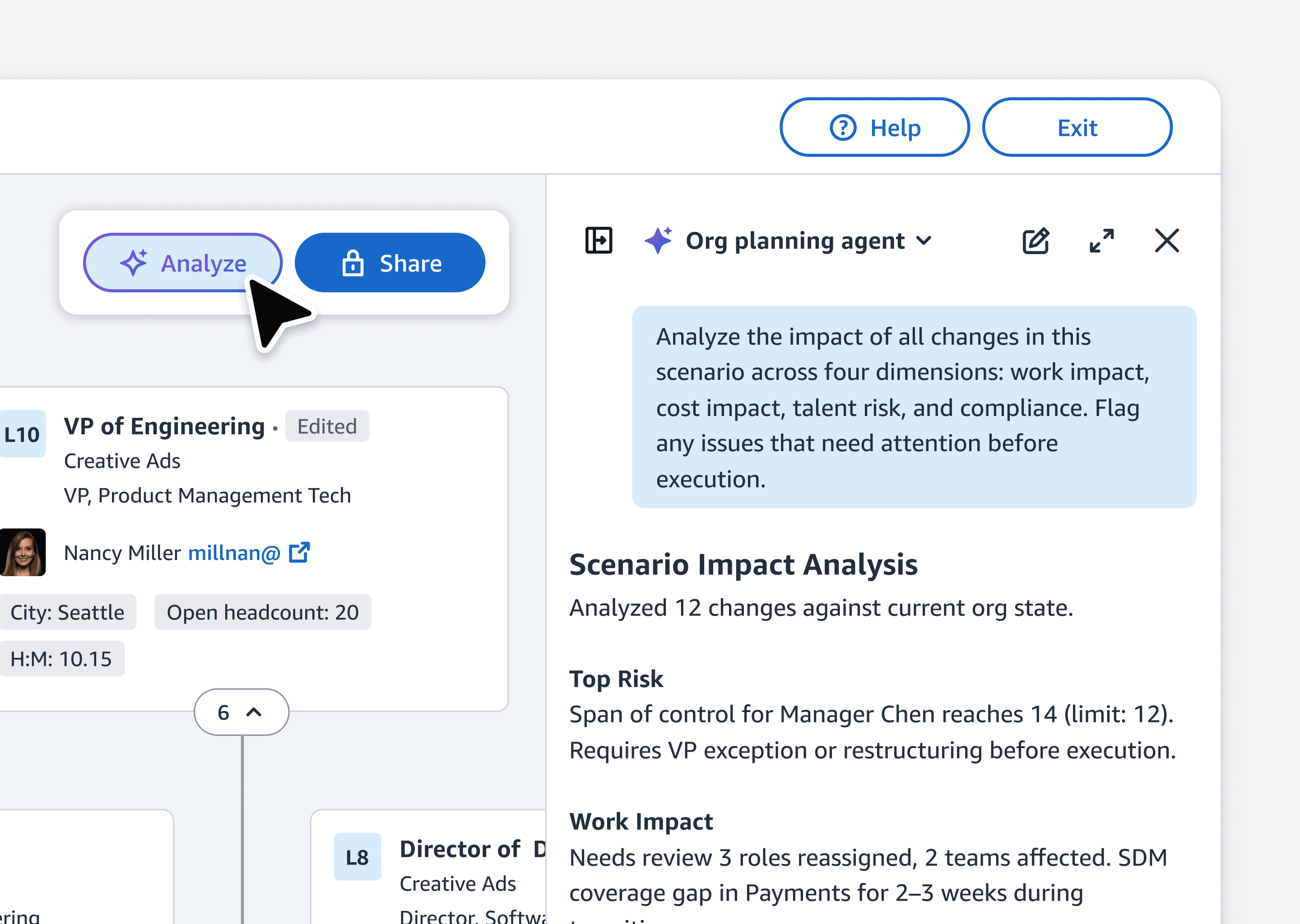

Trading off real-time for on-demand AI analysis

Original design: As users drag roles and change assignments, the system shows compliance risks, ratio impacts, and cost changes in real time.

What we shipped: Users build scenarios freely, then invoke AI analysis when ready.

Why we shifted: Real-time alerts that don't match what users want create noise. No one wants a Clippy announcing findings mid-thought. For v1, we made analysis on-demand.

What we preserved: The analysis is still structured around four pillars, and we agreed to revisit real-time analysis if a strong business case emerges.

On-demand analysis: users click Analyze when ready
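A minimal sketch of the on-demand flow, assuming a scenario is just a set of reporting-line edits: edits are recorded without side effects, and analysis runs only when explicitly invoked. The `Scenario` class, its methods, and the single `ratio_impact` analyzer are hypothetical stand-ins, not the shipped design.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable, Dict

Assignments = Dict[str, str]  # employee id -> manager id
Analyzer = Callable[[Assignments], object]


@dataclass
class Scenario:
    assignments: Assignments = field(default_factory=dict)
    analysis_runs: int = 0

    def move(self, emp_id: str, new_manager_id: str) -> None:
        """Record an edit. Deliberately triggers no analysis."""
        self.assignments[emp_id] = new_manager_id

    def analyze(self, analyzers: Dict[str, Analyzer]) -> Dict[str, object]:
        """Run each analyzer once, only when the user clicks Analyze."""
        self.analysis_runs += 1
        return {name: fn(self.assignments) for name, fn in analyzers.items()}


def reports_per_manager(assignments: Assignments) -> Dict[str, int]:
    """Hypothetical analyzer: direct-report count per manager."""
    return dict(Counter(assignments.values()))


scenario = Scenario()
scenario.move("e4", "e2")
scenario.move("e5", "e2")
scenario.move("e6", "e3")
runs_before_click = scenario.analysis_runs  # still 0: edits stay silent

result = scenario.analyze({"ratio_impact": reports_per_manager})
print(result)
```

Because analyzers are passed in as plain functions, the same set could later be attached to each edit if real-time analysis is revisited, without changing how scenarios are built.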

Reduced decision-making time with the MLP rollout

User feedback after rollout